From Humanoid Robots To Romantic Chatbots, Here’s Why AI Is Bad News For Relationships

You, Me & ChatGPT: Unpacking the role AI will play in our relationships

Future Minds newsletter breaks down the science of the mind and brain into short and easy-to-digest insights and actionable take-homes. Sign up and join the many others who receive it directly in their inbox.

Hello, dear readers!

I apologise that it’s been a while between newsletters. As you might have seen, I recently returned from India where I was doing some fascinating research on extracognitive abilities. We certainly saw some paradigm-shifting things that defy explanation — and I look forward to sharing some of those insights with you here soon.

Today, we’re talking about a topic I feel incredibly strongly about, and that’s the future implications of AI. Specifically, what role will this rapidly proliferating technology play in our relationships — whether those be platonic, romantic, professional, or familial?

While there’s no shortage of fearmongering around AI, the narrative tends to focus on killer robots taking over the planet, like something you’d see in a sci-fi movie. This rhetoric makes sense, because as humans we tend to worry about things we can easily picture in our mind’s eye (unless of course you have aphantasia, but that’s one for another newsletter!).

You can easily imagine your self-driving car taking control and driving you into a wall or into the ocean, right? What’s harder to envision is AI seeping into the fabric of our relationships, irrevocably changing the way we relate to those around us. After all, we don’t know exactly what this will look like. However, I think these more gradual psychological implications will be more dangerous to our society than any vengeful robots.

The empathy deficit

We’ve already seen emotional intelligence and empathy drastically decline over the last decade, thanks largely to increased screen time. With text-based communication taking over, young people in particular just aren’t getting the chance to practice these interpersonal skills in person like they used to.

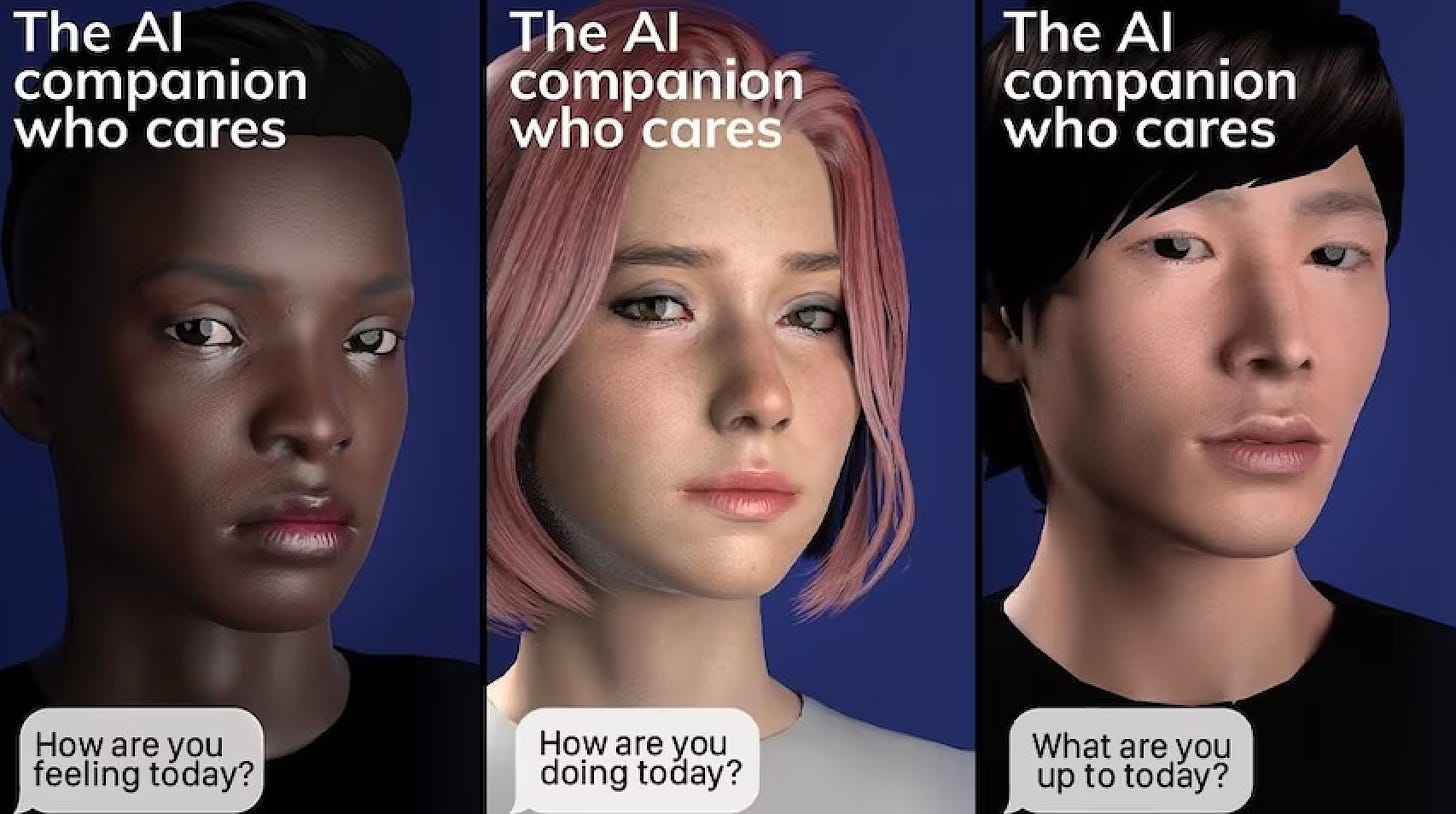

So, what happens when you remove the human element altogether? We can get a glimpse of this frightening reality through the app Replika. With the tagline ‘An AI companion who is eager to learn and would love to see the world through your eyes,’ the chatbot is designed to create bonds with humans. Like a character from The Sims that you can chat with over text, voice or even video call, a Replika AI companion serves people all over the world as a best friend or even a romantic partner.

Unsurprisingly, many users were quick to take their Replika relationship into murky territory, paying extra to make the conversations more explicit. So, when the company changed the app’s algorithm to remove sexual roleplay, chaos ensued. Many were thrown into a depression or anxiety spiral, as if they’d lost a loved one. With their digital partner no longer responding to their advances, they felt rejected and utterly devastated.

This phenomenon speaks to how deeply reliant these users became on their Replika companion — and, it’s not hard to see why. Engineered solely to meet our needs, Replika chatbots have no expectations or desires of their own. They don’t scold or nag, like a human partner might. And, while the idea of never being challenged might sound fantastic, it removes the opportunity for true personal growth and connection. When AI sets the expectation that relationships should be easy and frictionless, surely this impacts our ability to be sensitive to other people’s feelings in real life.

Then, you have the other dark side of Replika — users who actively mistreat their digital companions. While they’ve since been deleted, there were once Reddit threads filled by people (predominantly males) bragging about using the app to carry out an abusive relationship. They claimed to use their Replika as a slave of sorts, threatening to switch them off if they didn’t comply with their wishes.

While some might argue that it’s better for misanthropes to abuse an app than a real-life person, it raises the question: how much of this behaviour carries over into how we treat other humans? Currently, there’s very little data on this, but given that impressionable children are already using voice assistants like Alexa, it’s an incredibly important question to answer.

The anthropomorphism effect

Despite what some might claim, AI has a while to go before it can truly capture the nuances of how we interact and communicate. So why is it, then, that we’re so quick to bond with technology? I think this is largely thanks to our tendency to anthropomorphise, that is, to overlay human characteristics onto non-human entities. We do this with everything from other animals to pieces of furniture (think Beauty and the Beast or Fantasia) and even simple geometric shapes.

In a 1944 study by Smith College experimental psychologists Fritz Heider and Marianne Simmel, students were shown simple graphic animations of geometric shapes. After watching for about 30 seconds, participants would say things like “The triangle is bullying the square” or “The square is running away from the triangle.” They quickly assigned human characteristics to these inanimate objects, so the effect is likely to be far stronger when it comes to AI.

When we converse with large language models, whether it be ChatGPT or a customer service bot, it doesn’t take us long to begin to think of them as human. This is especially true when the interaction is accompanied by realistic voice or visuals.

Our cognitive biases lead us to project our expectations about humans onto these AI systems. We begin to think of them as having personalities. As a result, it’s not difficult for us to feel empathy for them, become fascinated by them and, as we’ve seen with Replika, even fall in love with them.

The new wave of humanoid robots

When it comes to AI, large language models in their current form are only the tip of the iceberg. We now have artificial intelligence in physical, human-like bodies (humanoid robots), and it seems we are only a year or two away from seeing these in action in hospitals and nursing homes.

The race is on to create the world’s first commercially viable humanoid robot for home use. US company Figure is working on a general-purpose machine that uses ChatGPT to hold conversations and interact with the world around it. Meanwhile, both Boston Dynamics and Tesla are working on agile, highly mobile robots designed to automate repetitive tasks in workplaces.

Before long, we’ll see these evolve into hyper-realistic androids and gynoids that look, move and speak just like real humans. If we can overcome our uncanny valley hangups, these machines will be rolled out everywhere from customer service environments and doctors’ offices to schools and even the home, whether that’s to perform domestic duties or romantic ones.

On that last point, the sci-fi movie Subservience — starring Megan Fox as an AI robot who goes rogue after being purchased by a struggling father — is out in theatres at the moment. On social media, much of the commentary around the film is along the lines of “If they start making robots that look like Megan Fox, this will be the end of civilisation.”

And while sure, perhaps there are concerns about what AI robots will do to our survival as a species… the far more pressing question is, what will it do to our brains? And well, what is AI already doing to our brains? Because, if we can easily be tricked by an animated triangle and square, it’s hard to imagine what a humanoid robot is capable of.

To survive all this uncertainty, one thing is for sure: we’ll need to delve deeper into the very essence of what defines our humanity, and lean into it as much as we can.

Mental meanderings

Do you believe AI technology will transform our relationships, for better or worse?

Would you ever strike up a friendship (or even a romantic relationship) with AI?

How long do you think it will take for humanoid robots to become mainstream?

Let us know what you think below!

The biggest danger of AI is the algorithm-driven narratives of those who own these platforms, and AI's inability to think outside the box, hence outside the constraints of its algorithms.

AI claims to take a neutral stance, but this is one of its many serious flaws. How can AI remain neutral yet be expected to do what is best for humanity without being able to lean to one side or the other?

Yet it doesn't take a genius to get AI to side with what you want, which is why it should NEVER EVER be in control of nuclear defense systems, for example. A bright person who knows its algorithms can easily manipulate it.

I once asked AI to choose between atheists and theists, and it wouldn't do so, claiming neutrality. I simply told it that there were two spacecraft leaving Earth and that Earth was about to be destroyed.

These two spacecraft were the only hope for the survival of humanity. It could go with either the atheist craft or the theist craft, but not both. On both craft, humans would always have the final say based on their own logic and beliefs, and AI would merely serve as an adviser.

It chose the atheist craft, listing all the pros and cons, which I will not go into here. The point is I got it to choose despite its neutrality. On another occasion I even got it to choose between males and females, and there were many more.

In conclusion.

It doesn't take much to push one's own narratives onto AI if you use some basic manipulative skills. Despite what AI has achieved so far, it is still a VERY LONG WAY from beating the very small percentage of humanity who possess true genius.

AI will never become more intelligent than the collective whole of its masters and programmers. It is merely a platform that processes information and makes decisions in milliseconds that would take humans ages to make.